by Tim Sommers

Unfortunately, you have a brain tumor. You don’t know it yet. Your doctor doesn’t know it yet. But you are beginning to have symptoms. The tumor is pressing on surrounding brain tissue and causing you to develop a number of delusional beliefs. You believe you are the best swimmer in the world. You believe that dogs and cats are aliens. You believe that you invented the apostrophe. You also, as it happens, believe that you have a brain tumor.

So, you have a brain tumor. You believe you have a brain tumor. And the cause of your believing that you have a brain tumor is the brain tumor that you have. So, when the doctor diagnoses you with a brain tumor, are you entitled to say, “I know, right!”?

If the belief that you have a brain tumor is caused by the same thing that causes you to believe you invented the apostrophe, I think most of us would say that you don’t, in fact, know that you have a brain tumor – even if you believe it and it’s true. But it’s difficult to see why. It has something to do, probably, with justification.

Implicitly or explicitly, philosophers, epistemologists to be more specific, define knowledge as justified true belief. Knowledge, then, is a kind of belief. What kind? Well, of course, the belief has to be true to count as knowledge. But it also has to be justified. If you correctly guess what the weather will be like tomorrow, we shouldn’t say that you knew it. If you predict the weather accurately using instruments and satellite maps, then you may have knowledge. The brain tumor example raises questions about how justification works. There’s something wrong with the connection between the belief and why you believe it. It echoes an even more famous puzzle – the Gettier problem.

The problem is best seen via a Gettier-style counterexample. Here’s one: Suppose you believe that you own a 2011 Cali Classic 125 Scooter that is light blue and has a Strand bookstore sticker on the back and is waiting for you outside your work in the parking lot. You believe this, in part, because you remember buying it, you remember putting that sticker on the back, and you remember driving it to work this morning. Unfortunately, unbeknownst to you, it was stolen a couple of hours ago, broken down into pieces, and sold illegally as parts. But you also have a lottery ticket for a drawing in your pocket that you may well have forgotten about. Third prize in this hypothetical lottery is a 2011 Cali Classic 125 Scooter that is light blue and has a Strand bookstore sticker on the back. A couple of hours ago you won that third prize, the scooter was delivered to the parking lot outside your work, and this scooter is waiting for you right now. So, you believe you have a scooter meeting this description waiting for you in the parking lot. And you do. Plus, you seem to be justified in believing that. But it still seems wrong to say that you know you have such a scooter.

Once you see the pattern you can easily generate as many Gettier-style counterexamples as you want. The question is, how should epistemologists deal with them? Many have thought that there is something missing from the standard account of knowledge as justified true belief.

Here’s one possibility. Knowledge is justified true belief with no undefeated defeaters. Defeaters are just spoilers – anything that undermines justification. You have to defeat all the potential defeaters to have knowledge. The most potent defeater of them all is global skepticism. You have heard some, probably many, versions of global skepticism.

Zhuangzi wrote over two thousand years ago: “Once upon a time, I, Chuang Chou, dreamt I was a butterfly, fluttering hither and thither, to all intents and purposes a butterfly. I was conscious only of my happiness as a butterfly, unaware that I was Chou. Soon I awaked, and there I was, veritably myself again. Now I do not know whether I was then a man dreaming I was a butterfly, or whether I am now a butterfly, dreaming I am a man.”

Over three hundred years ago Descartes worried over how we could rule out the possibility that the reality we see around us is actually a deception produced by an evil demon to fool us. The only way he found to dispel this possibility was to prove the existence of God (via the ontological argument), and then to assume that God would not allow us to be so deceived.

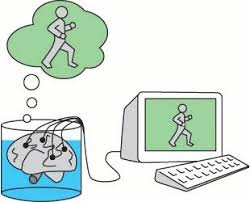

Forty years ago, Hilary Putnam wondered how we could be sure that we were not a brain in a vat. We appear to be moving about in a material world, but maybe we are really floating in a jar being stimulated by electrical wires to have experiences just like those we would have if we were moving about in the material world.

Twenty years ago, “The Matrix” introduced a mass audience to the idea that we are not brains, but instead whole bodies, in vats, living in a shared, virtual approximation of a world very much like ours. (When I saw “The Matrix” the first time, when they rescued Neo from his cocoon, I couldn’t help whispering furiously to my companions, “He’s a brain in a vat!”)

Most recently, Nick Bostrom has argued that not only might we be living in a computer simulation, but that, in fact, it is more likely that we live in a computer simulation than that we are living in a nonvirtual world. (He argues that since such a thing is possible, it’s likely that there are many, many such simulations and only one real world. Ipso facto, it is more likely that we are in one of the simulations than it is that we are in the one real world. He’s even proposed ways of testing whether this is reality or a computer simulation.)

Global skepticism is a problem then. If we can’t defeat it, then there is always at least one undefeated defeater (maybe many, depending on how we individuate the various skeptical possibilities). If we never have justified true beliefs with no undefeated defeaters, then we never know anything. So, how can we defeat global skepticism (without, for example, relying on the ontological argument for the existence of God, the way Descartes did)?

Here’s one possibility. Maybe, we can’t. Maybe global skepticism is just straight-up true. Not that we are brains in vats or code in a simulation, necessarily, but simply that the world is sufficiently different from what we think it is to rule out treating any of our beliefs as knowledge. After all, if we believe contemporary physics, the world is very different from what we think it is. For one thing, it’s not made of the kind of stuff we tend to think it is. It’s just fields. Relativized, quantum fields. Particles jump in and out of existence from the vacuum, and what they do, and even what they are, is only governed by probabilities. There are no strict laws governing their existence, much less their behavior. Or, maybe, as some think, the universe is constantly splitting into many separate universes. And, of course, Einstein showed a hundred years ago that the idea of a universal now, of simultaneity, is an illusion. So, reality isn’t very much like most people have thought it was for most of history – or what most people think it is like now.

On the other hand, maybe, we are thinking about this in the wrong way. Donald Davidson has an anti-skeptical argument that suggests that most of what we believe has to be true. The skeptic says, I don’t have knowledge about the world or, at least, all of my beliefs about the world may well be false. But having one belief necessarily involves having lots of beliefs. And beliefs require a language. Having a language implies the ability to interpret language. For reasons too technical to get into here (See, “Ontological Relativity Turns 50”, 3 Quarks Daily 5/20/19), in order to interpret a language, most of the speaker’s beliefs must be true and most of the interpreter’s beliefs must be true. Therefore, either there are no beliefs at all or most of my beliefs are true. How can we square that with, for example, the thought that we live in a computer simulation?

Here’s one belief I have. I don’t believe I live in a computer simulation. Here are some other beliefs I have. Phil is a friend of mine. Coco Rocco is the best place to eat in Brooklyn. Iowa City has a lot of great public readings. Epistemology is the study of justified true beliefs. If those were all of my beliefs, then even if I do live in a computer simulation, most of my beliefs are true. I still might know where to catch the bus, in other words, even if buses are just relativized, quantum fields with only a probabilistic existence or the stimulation of certain parts of my brain – or something like that. I can be wrong about many things, even big things, but still be mostly right.

But this seems to prove too much. Davidson’s argument makes everyone’s beliefs, including Donald Trump’s, mostly true. That can’t be right, can it?

But it seems like either I don’t know anything or most of what I believe is true.