“We the people, in order to form a more perfect union.”

Two hundred and twenty-one years ago, in a hall that still stands across the street, a group of men gathered and, with these simple words, launched America’s improbable experiment in democracy. Farmers and scholars; statesmen and patriots who had traveled across an ocean to escape tyranny and persecution finally made real their declaration of independence at a Philadelphia convention that lasted through the spring of 1787.

The document they produced was eventually signed but ultimately unfinished. It was stained by this nation’s original sin of slavery, a question that divided the colonies and brought the convention to a stalemate until the founders chose to allow the slave trade to continue for at least twenty more years, and to leave any final resolution to future generations.

Of course, the answer to the slavery question was already embedded within our Constitution – a Constitution that had at its very core the ideal of equal citizenship under the law; a Constitution that promised its people liberty, and justice, and a union that could be and should be perfected over time.

And yet words on a parchment would not be enough to deliver slaves from bondage, or provide men and women of every color and creed their full rights and obligations as citizens of the United States. What would be needed were Americans in successive generations who were willing to do their part – through protests and struggle, on the streets and in the courts, through a civil war and civil disobedience and always at great risk – to narrow that gap between the promise of our ideals and the reality of their time.

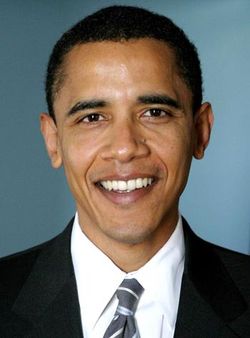

This was one of the tasks we set forth at the beginning of this campaign – to continue the long march of those who came before us, a march for a more just, more equal, more free, more caring and more prosperous America. I chose to run for the presidency at this moment in history because I believe deeply that we cannot solve the challenges of our time unless we solve them together – unless we perfect our union by understanding that we may have different stories, but we hold common hopes; that we may not look the same and we may not have come from the same place, but we all want to move in the same direction – towards a better future for our children and our grandchildren.

This belief comes from my unyielding faith in the decency and generosity of the American people. But it also comes from my own American story.

I am the son of a black man from Kenya and a white woman from Kansas. I was raised with the help of a white grandfather who survived a Depression to serve in Patton’s Army during World War II and a white grandmother who worked on a bomber assembly line at Fort Leavenworth while he was overseas. I’ve gone to some of the best schools in America and lived in one of the world’s poorest nations. I am married to a black American who carries within her the blood of slaves and slaveowners – an inheritance we pass on to our two precious daughters. I have brothers, sisters, nieces, nephews, uncles and cousins, of every race and every hue, scattered across three continents, and for as long as I live, I will never forget that in no other country on Earth is my story even possible.

It’s a story that hasn’t made me the most conventional candidate. But it is a story that has seared into my genetic makeup the idea that this nation is more than the sum of its parts – that out of many, we are truly one.

The caricature of a philosopher is of an otherworldly professor sitting in a comfy armchair in an Oxbridge college, speculating on the nature of reality using only his or her intellect and a few books. This has some basis in reality. Chemistry requires test tubes; history needs documents. In recent years, the main tool of the philosopher has been grey matter. The subject’s evolution can be painfully slow, tiptoeing forward from footnote to footnote. But not always. Every so often a new movement overturns the orthodoxies of received opinion. We might just be entering one of those phases.