by Daniel Gauss

Standing at Raj Ghat, the memorial for Gandhi in New Delhi, near where his corpse was cremated, I began to think about a problem I’ve been grappling with for a long time. With all the good, kind-hearted and sincere people in the world, why is the world not becoming a substantially more humane place?

I am surrounded by incredibly sweet people. I’m often deeply moved, and genuinely amazed, by how generous and compassionate they are, and the lengths they go to in order to be helpful. If you were to judge the world solely by these folks, you would think it to be a gentle, caring place. Comedian Patton Oswalt wrote (after the Boston Marathon bombing), in regard to those causing harm in the world, “The good outnumber you, and we always will.” So, then, why are the good people losing?

It’s pretty clear that the world is not a gentle, caring place. There are at least 50 state-based armed conflicts right now, corruption and duplicity thrive, greed runs unquestioned and unchecked, and our climate is deteriorating. In the USA our prisons are full, children struggle to read, income inequality is outrageous and people are barely scraping by paycheck to paycheck. We are in another war. Many of our cities are still racially segregated, and class divisions cause unjust treatment and disparate life opportunities and outcomes. Our cities are filled with homeless people. The news seems like an unending sequence of cruelty and incompetence.

Standing in silence before the eternal flame at Raj Ghat, reflecting on all that this man did, I felt that his determined effort not only to become more humane, but also to challenge the larger systems that produce suffering, provided the beginning of an answer for me.

After visiting Raj Ghat and wandering through the nearby Gandhi museum, which traces his life from infancy to death, I came away with a big theory that involves what might be called “micro-morality” and “macro-morality.” I think most people shoot for and are largely satisfied with micro-morality: politeness, kindness, volunteering, controlling their temper, forgiving, being nice.

Gandhi demonstrated that micro-morality is essential, but not good enough.

On method

The only time I met Rex Reed, I was about seven years old. I went with my dad to Reed’s apartment in The Dakota on Central Park West so he could offer an estimate on painting the place. My father ran a very small general contracting business called Ken’s Home Improvements. Typical jobs involved him and one or two other workers. His theory on acquiring customers was to work for rich people since they had money; economies of scale were anathema to his soul. Reed qualified. A film and cultural critic for the New York Times, GQ, and Vogue, he’d been a judge for both the Berlin and Venice International Film Festivals by the time my little feet traipsed across his hardwood floors in the famous 19th century building with custom apartments and famous residents such as John and Yoko, and

California’s primary is in about two weeks, and it’s a mess. The panic is slightly subsiding, though, since Democrats have started polling in one of the top two spots in the race for governor. For months, Republicans were polling first and second, with eight Democrats trailing because they split the vote. The California Democratic Party chair even urged low-polling candidates to drop out so as not to be spoilers.

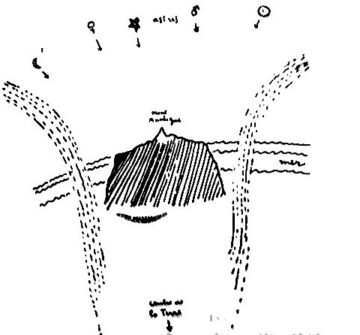

David Hammons. Untitled, Ca. 1990.
